Thanks to Mahnaz Qafari for the post!

Technological advances generate an astronomical amount of data and, at the same time, enable us to collect, store, and analyze it at a scale far beyond human abilities. Consequently, we depend more and more on these technologies for our daily tasks. For example, we rely on Machine Learning (ML) and Artificial Intelligence (AI) to make or support decisions in many areas, such as creditworthiness assessment, job recommendations, healthcare, and applicant selection. The more we rely on these techniques, the more they influence our lives, and hence the more concerns are raised about their ethical aspects, such as fairness. In discussions of ethics in ML/AI, you often hear the terms trustworthy AI and FACT, an acronym for Fairness, Accountability, Confidentiality, and Transparency, where the ultimate goal is to have algorithms that ensure FACT by design. However, these criteria are interconnected. In the following, we look at a study that focuses on the inter-dependencies between these factors.

When a company wants to publish data that includes sensitive information, it is required by legislation (such as the EU's General Data Protection Regulation [2]) to release a privacy-preserving version of the data. In [1], the authors studied how using such a version of the data as the input to critical decision processes affects fairness. To that end, they analyzed the effect of using differentially private datasets as input to several resource allocation tasks. Loosely speaking, a mechanism that releases data is differentially private if its output distribution is almost the same (differing by at most a factor of e^ε) whether or not any single individual's record is included in the dataset, so the release reveals little about any one individual. The authors considered two problems:

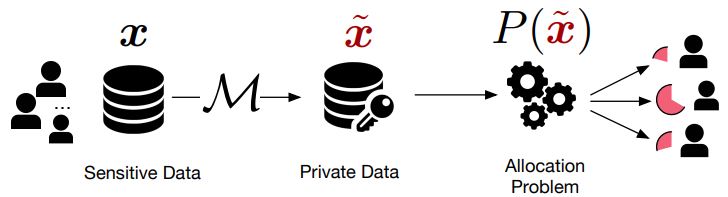

- An allotment problem where the goal is distributing a finite set of resources among some entity. This process is shown in Figure 1 (taken from [3]). An example of such a situation is assigning a number of doctors to the healthcare system of a district.

- A decision rule such as application selection which determines whether some some benefits is granted to some entity.

Figure 1: The private allocation process where x is a dataset, M is an epsilon-differentially private mechanism, hat(x) is the differential private version of x, and P(.) is the allotment function [3].
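The private allocation process in Figure 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method or experimental setup of [3]: x is assumed to be a vector of counts (e.g. population per district) in which each individual contributes to exactly one count, so the Laplace mechanism with scale 1/ε plays the role of M; negative noisy counts are clipped to zero as post-processing; and P is a hypothetical proportional allotment of `n_resources` units (e.g. doctors). The function names and the numbers in the usage example are made up for illustration. Estimating the expected allocation E[P(x̂)] by Monte Carlo and comparing it with P(x) gives the kind of distance the study uses to characterize fairness.

```python
import numpy as np


def laplace_mechanism(x, epsilon, rng):
    """Plays the role of M: an epsilon-DP release of a count vector.

    Assumes L1 sensitivity 1 (each individual contributes to one count),
    so Laplace noise with scale 1/epsilon is added to every entry.
    """
    return x + rng.laplace(loc=0.0, scale=1.0 / epsilon, size=x.shape)


def proportional_allotment(counts, n_resources):
    """Hypothetical allotment function P: share resources in proportion to counts."""
    # Post-processing: a count cannot be negative. Post-processing a DP
    # release does not weaken its privacy guarantee, but it can bias results.
    counts = np.clip(counts, 0.0, None)
    return n_resources * counts / counts.sum()


def expected_private_allocation(x, epsilon, n_resources, trials=20_000, seed=0):
    """Monte Carlo estimate of E[P(x_hat)] where x_hat = M(x)."""
    rng = np.random.default_rng(seed)
    samples = [
        proportional_allotment(laplace_mechanism(x, epsilon, rng), n_resources)
        for _ in range(trials)
    ]
    return np.mean(samples, axis=0)


# Hypothetical population counts for four districts and 100 doctors to allot.
x = np.array([2000.0, 500.0, 20.0, 5.0])
true_alloc = proportional_allotment(x, n_resources=100)
private_alloc = expected_private_allocation(x, epsilon=0.1, n_resources=100)

print("P(x):       ", np.round(true_alloc, 3))
print("E[P(x_hat)]:", np.round(private_alloc, 3))
print("bias:       ", np.round(private_alloc - true_alloc, 3))
```

With these assumed numbers, clipping pushes the smallest district's noisy count upward on average, so in expectation it receives noticeably more than its true share while the largest district receives slightly less. Relative to their true shares, the smallest entities are affected the most.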

To characterize the effect on fairness, they studied the distance between the expected allocation obtained when the privacy-preserving version of the dataset is used as input and the allocation obtained from the ground-truth data. The authors of [1] showed that the noise added to achieve privacy can disproportionately grant benefits or privileges to some groups over others. They also propose several methods to mitigate this effect:

- The output perturbation approach, which randomizes the outputs of the algorithms rather than their inputs.

- Linearization by redundant releases, which involves modifying the decision problem.

- A modified post-processing approach.
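For the second problem, a decision rule, the distance between the expected private decision and the ground-truth decision can be computed in closed form for a simple case. The sketch below assumes, purely for illustration, that an entity receives a benefit when its released count x̂ = x + Lap(1/ε) is at least a threshold τ, with sensitivity 1 as before; the entities, τ, ε, and function names are hypothetical, and this is not the decision problem studied in [1]. Because the Laplace tail probability is known exactly, the probability of receiving the benefit needs no simulation, and subtracting the ground-truth decision gives the bias for each entity.

```python
import numpy as np


def laplace_tail(t, b):
    """P(L >= t) for L ~ Laplace(0, b)."""
    t = np.asarray(t, dtype=float)
    p = 0.5 * np.exp(-np.abs(t) / b)
    return np.where(t >= 0, p, 1.0 - p)


def grant_probability(x, tau, epsilon):
    """Probability that x_hat = x + Lap(1/epsilon) meets the threshold tau."""
    # x + L >= tau  <=>  L >= tau - x
    return laplace_tail(tau - x, 1.0 / epsilon)


# Hypothetical entities: two just below, one just above, one far above tau.
x = np.array([95.0, 99.0, 101.0, 150.0])
tau, epsilon = 100.0, 0.1

truth = (x >= tau).astype(float)               # decision on the ground-truth data
private = grant_probability(x, tau, epsilon)   # expected decision on private data
bias = private - truth

print(np.round(bias, 3))  # approximately [0.303, 0.452, -0.452, -0.003]
```

Entities whose true values lie close to τ are the most affected: those just below it gain the benefit with substantial probability and the one just above it loses it almost half the time, while the entity far from the threshold is barely affected. In this toy setting, the same amount of noise treats entities very differently depending only on where they sit relative to τ.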

We have given an overview of a study showing that using a differentially private version of the data as the input to other critical algorithms can have significant societal and ethical impacts on individuals. Such effects have also been discussed in [4,5]. Here we have highlighted just one possible consequence of the inter-dependencies between the FACT factors in ML/AI, namely the effect of a specific privacy-preserving technique. You are most welcome to share your thoughts in the comments on other perspectives on these inter-dependencies, as well as on how to prevent their negative effects.

References:

[1] https://languages.oup.com/google-dictionary-en/

[2] https://gdpr-info.eu/

[3] Tran, C., Fioretto, F., Van Hentenryck, P., Yao, Z. (2021). Decision making with differential privacy under a fairness lens. In Proceedings of the International Joint Conference on Artificial Intelligence (IJCAI).

[4] https://arxiv.org/abs/2010.04327

[5] Pujol, D., McKenna, R., Kuppam, S., Hay, M., Machanavajjhala, A., Miklau, G. (2020). Fair decision making using privacy-protected data. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (pp. 189-199).
